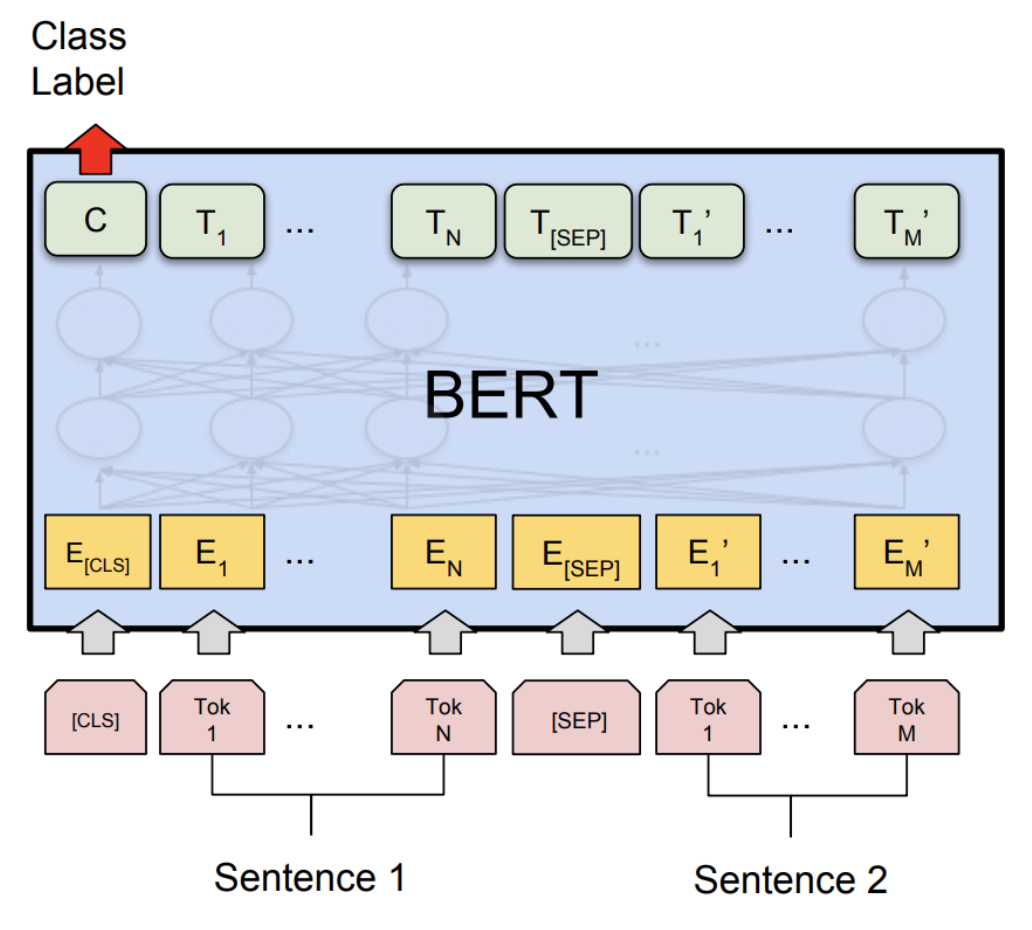

3: A visualisation of how inputs are passed through BERT with overlap... | Download Scientific Diagram

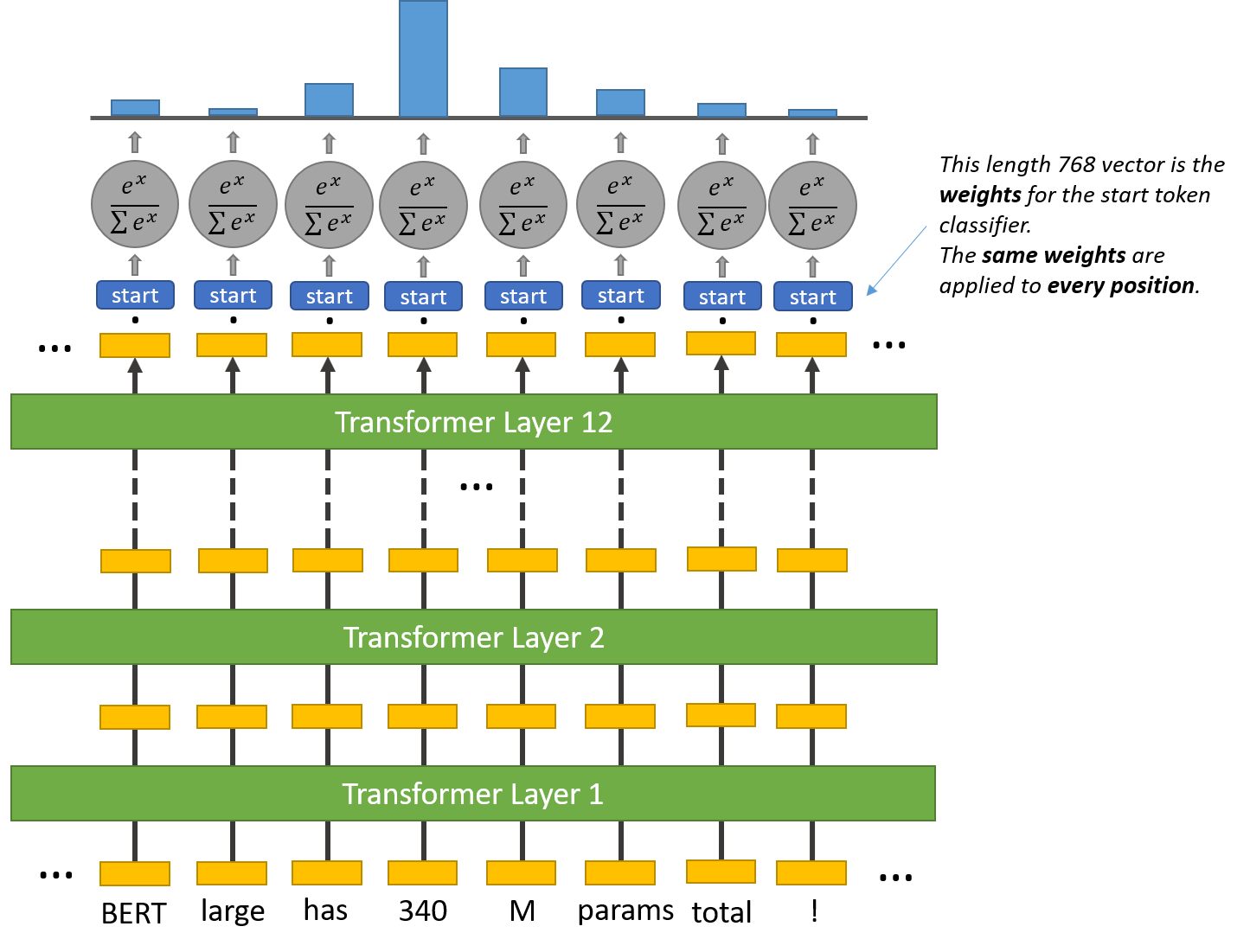

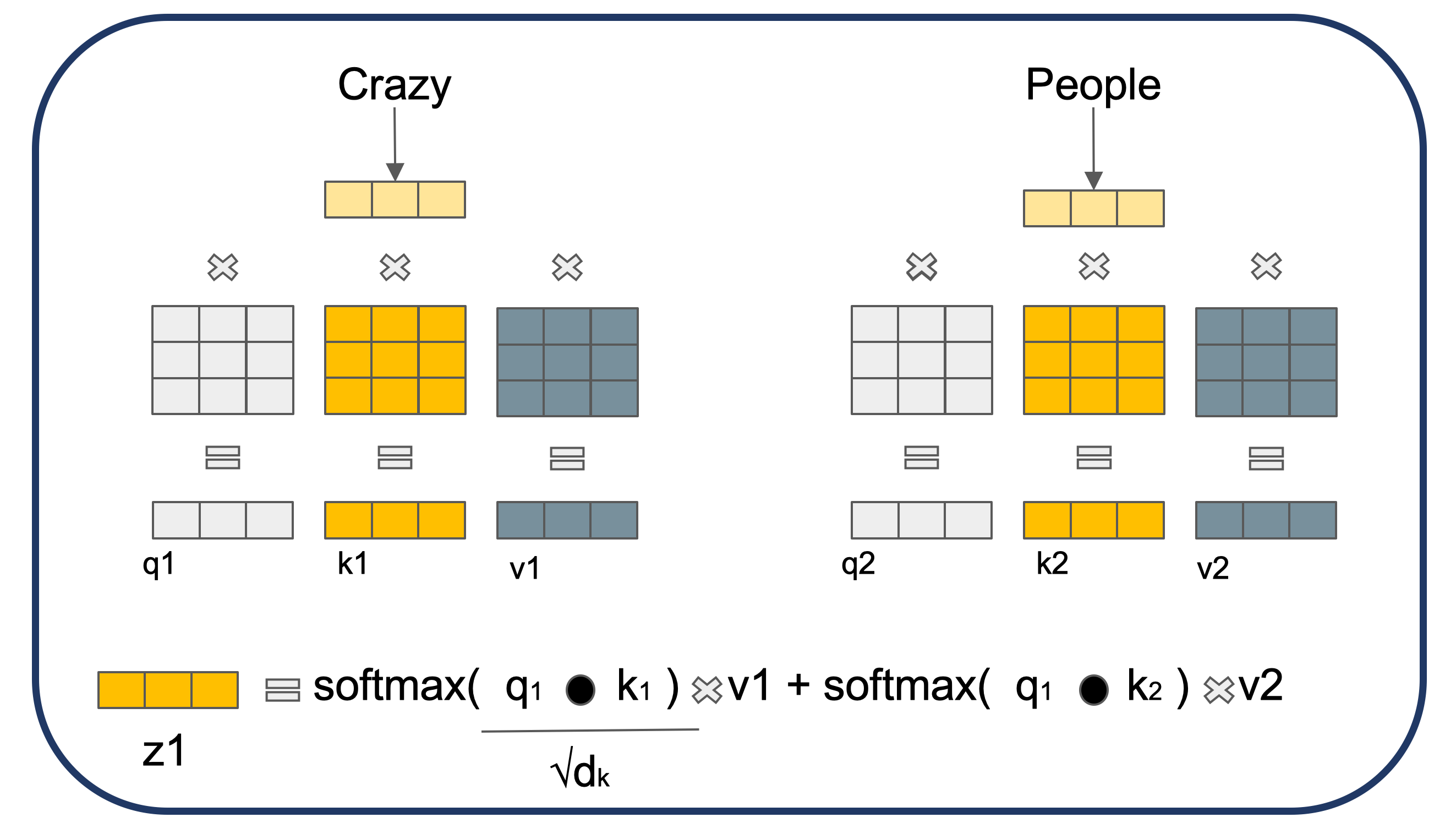

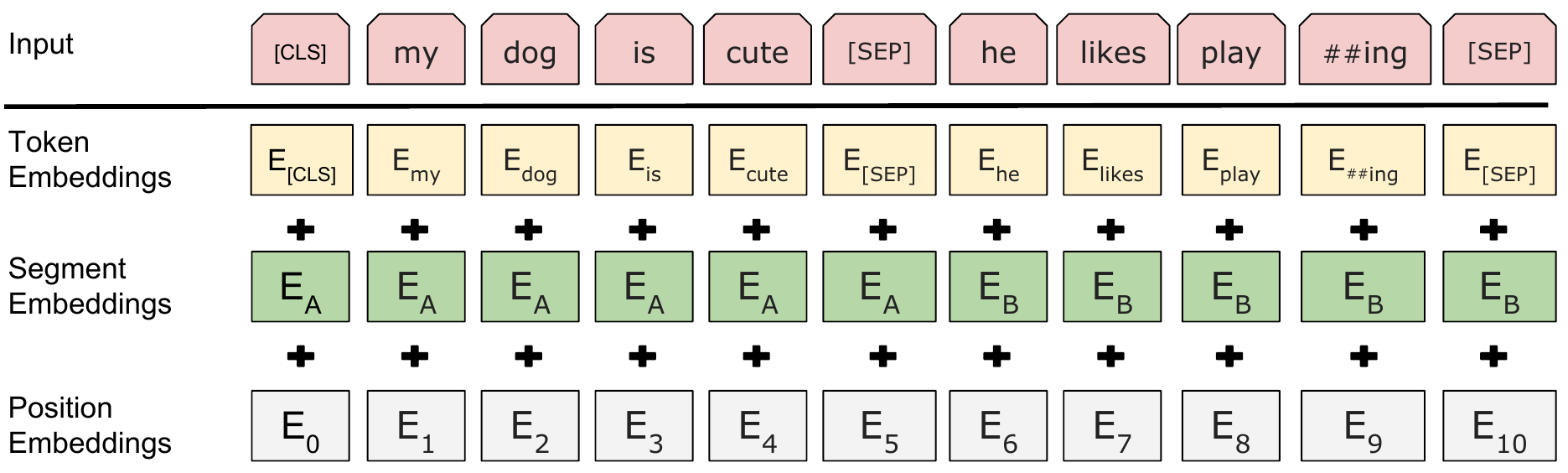

15.8. Bidirectional Encoder Representations from Transformers (BERT) — Dive into Deep Learning 1.0.0-beta0 documentation

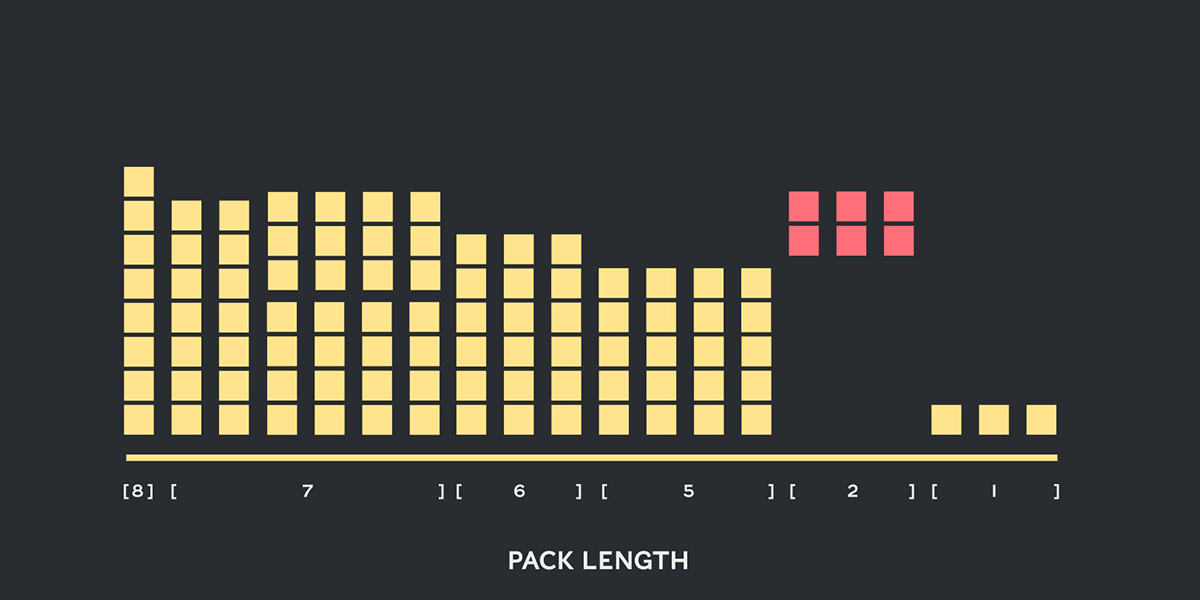

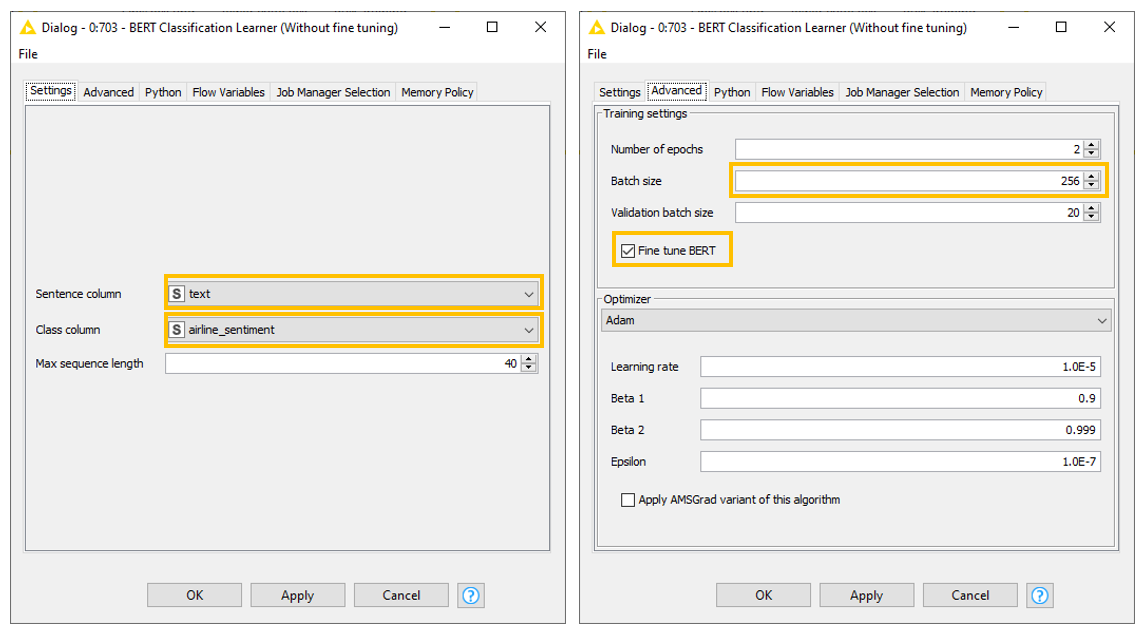

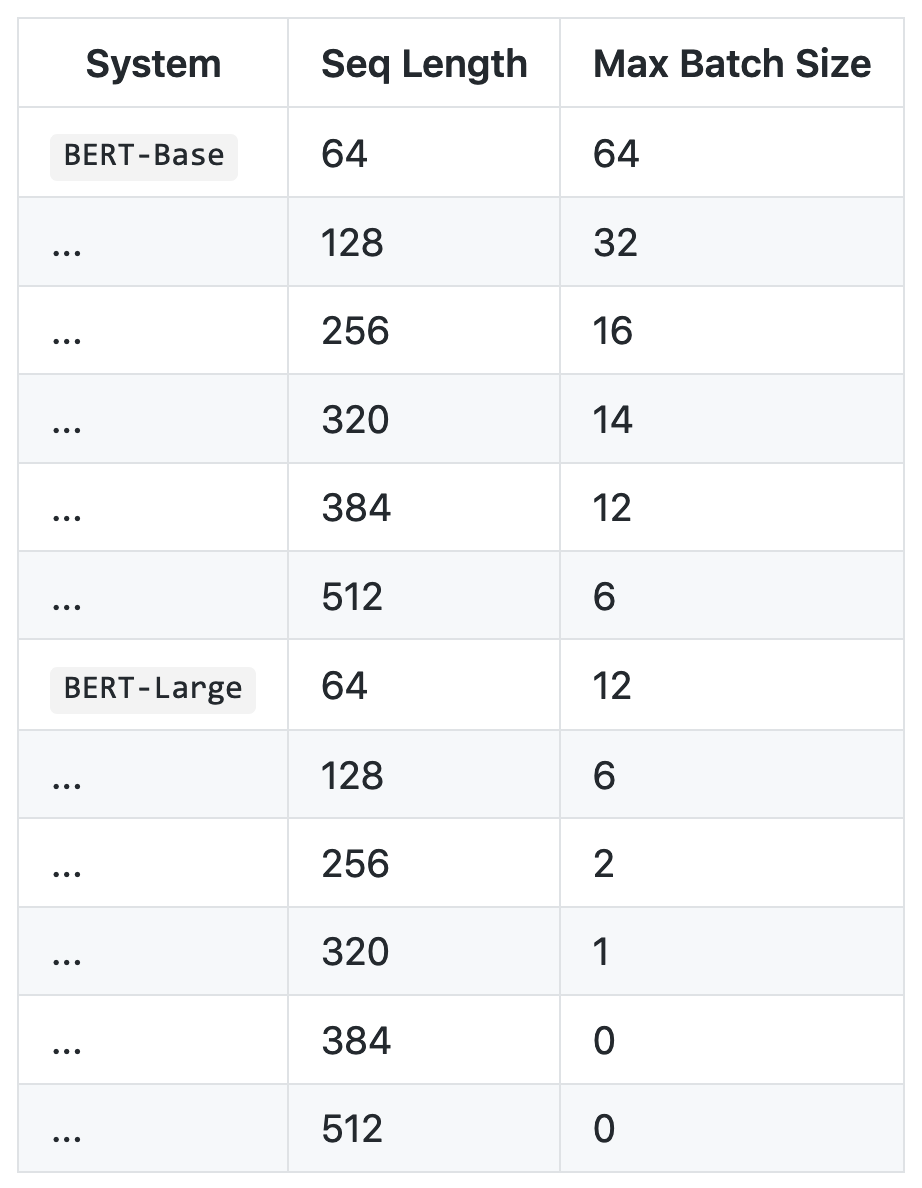

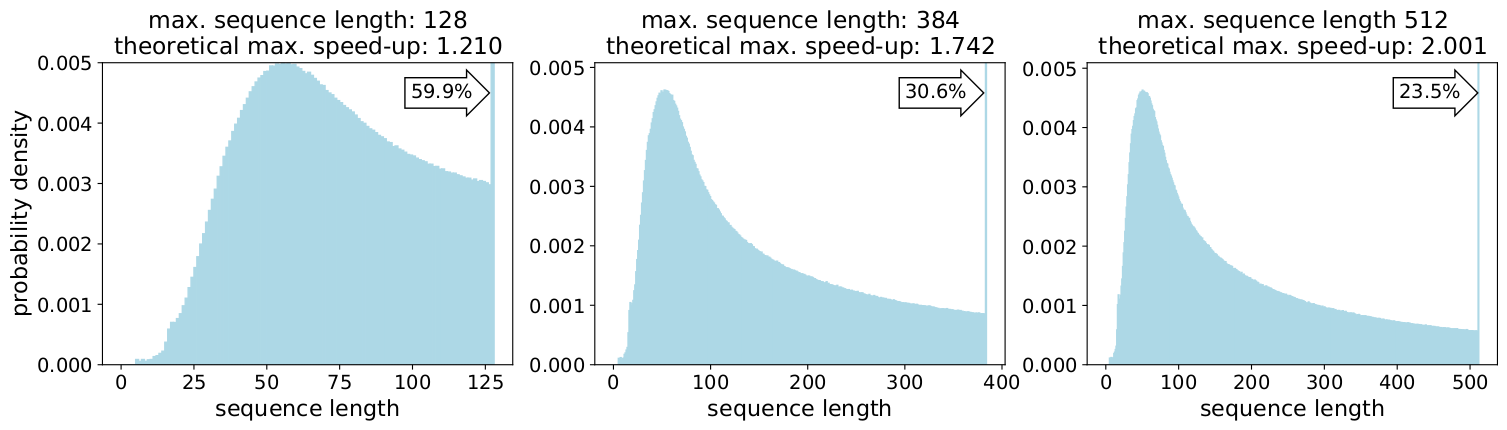

Introducing Packed BERT for 2x Training Speed-up in Natural Language Processing | by Dr. Mario Michael Krell | Towards Data Science

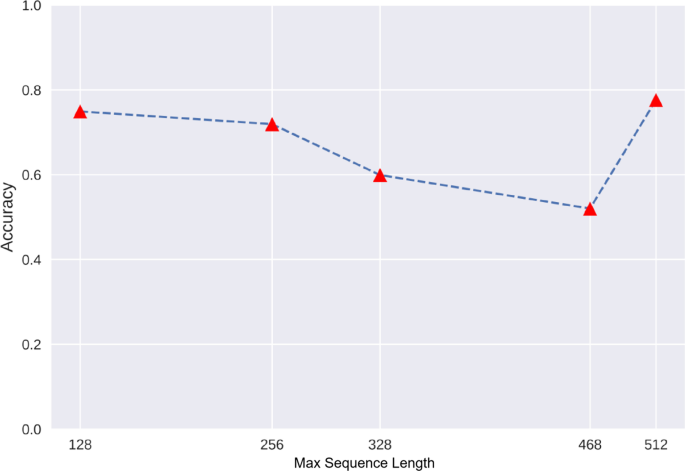

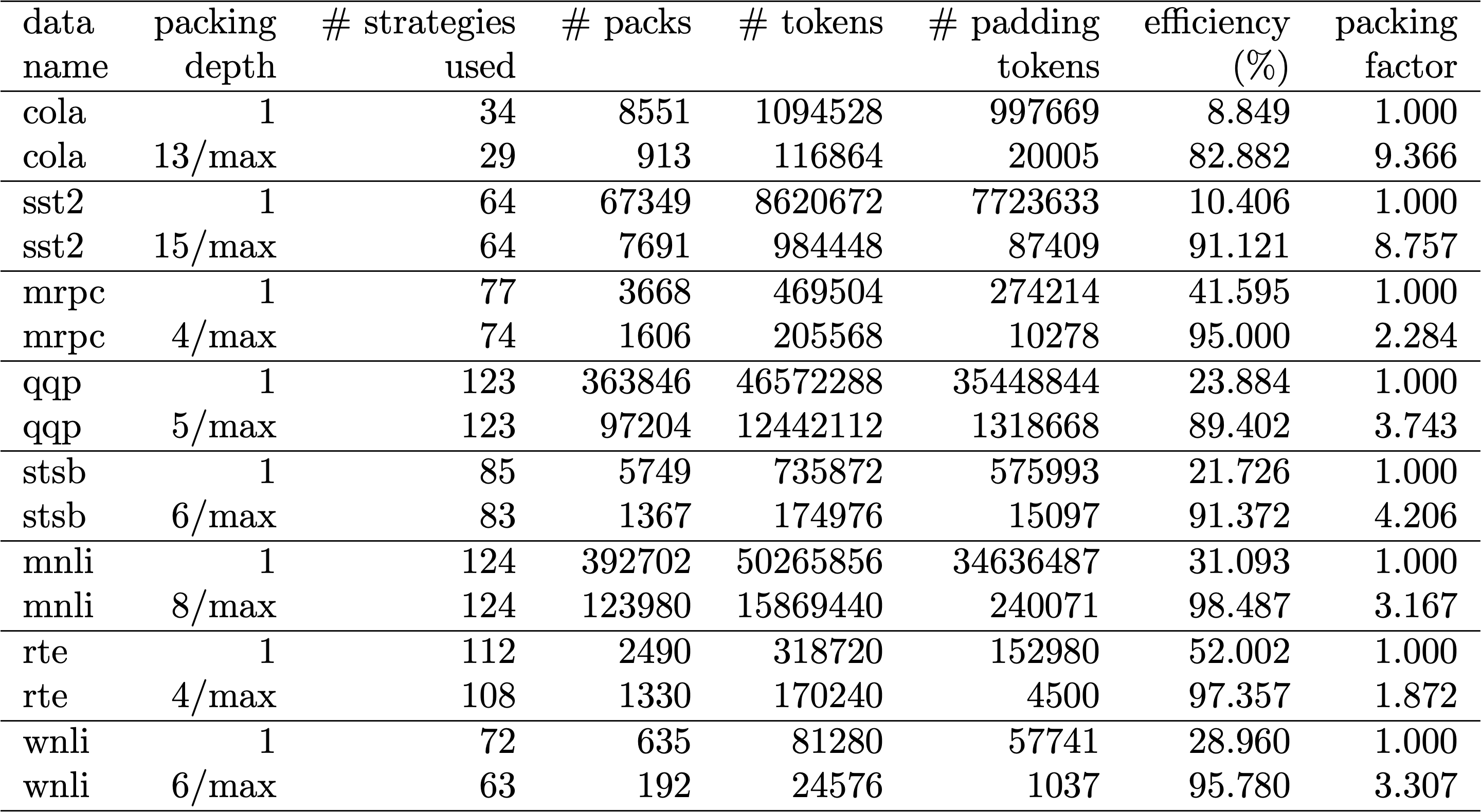

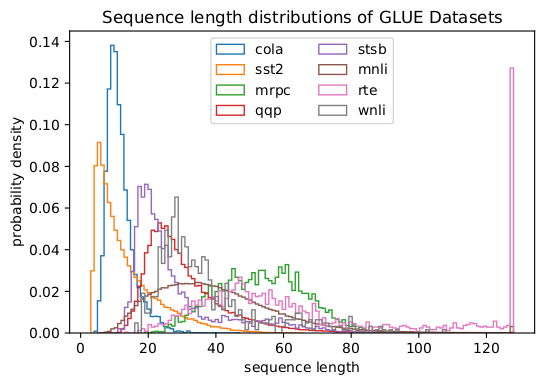

![Lifting Sequence Length Limitations of NLP Models using Autoencoders | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/2b1d6724786d6b5cdd38b0f8556bc9fa7ea8fa1b/7-Table3-1.png)