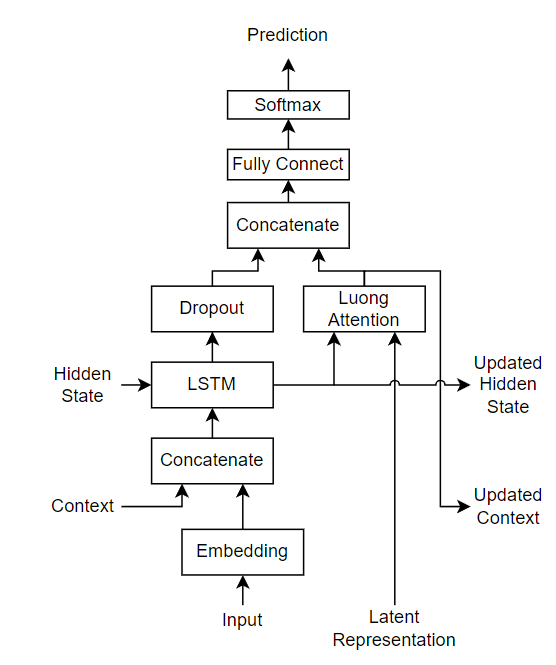

A Sequence-to-Sequence Approach for Remaining Useful Lifetime Estimation Using Attention-augmented Bidirectional LSTM - ScienceDirect

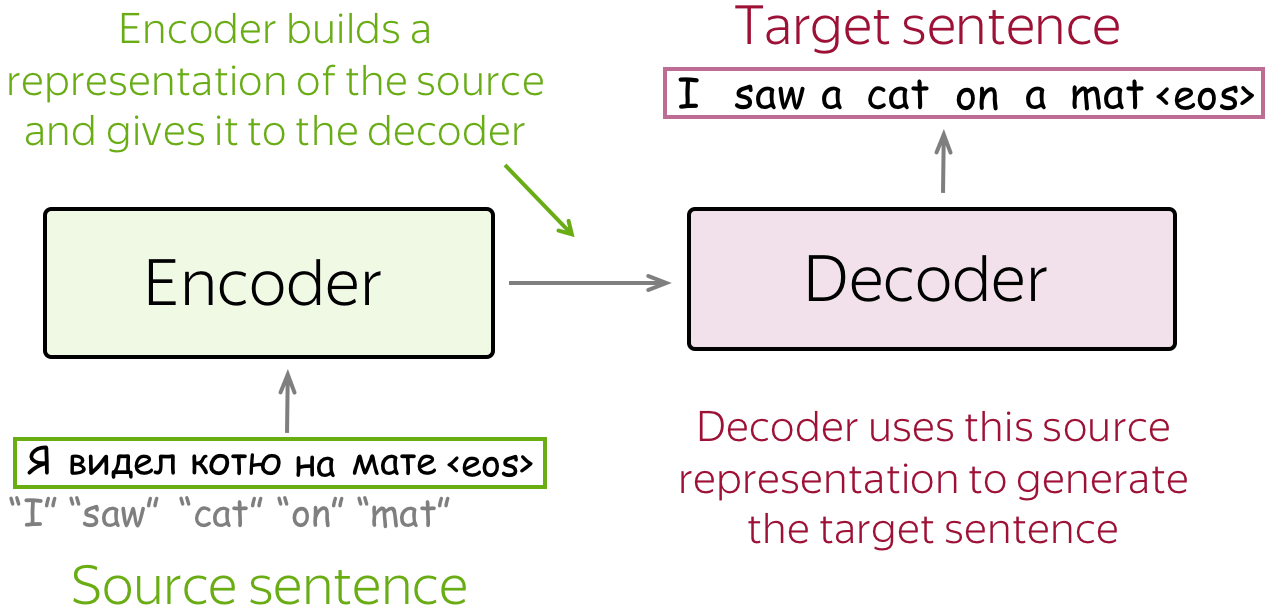

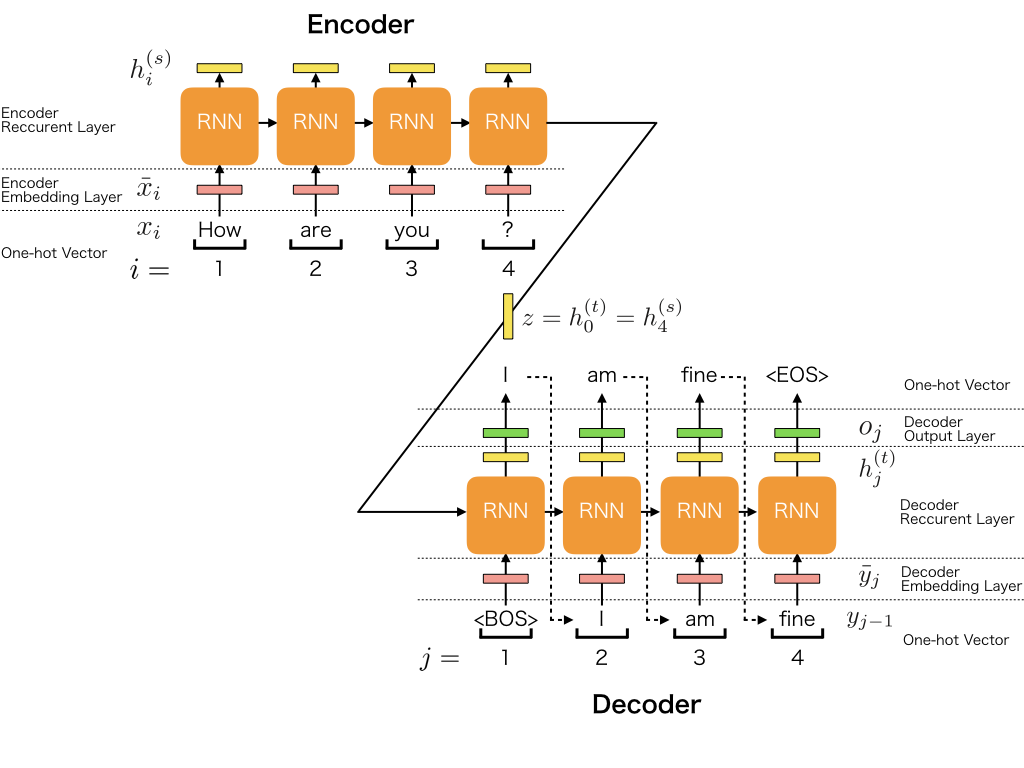

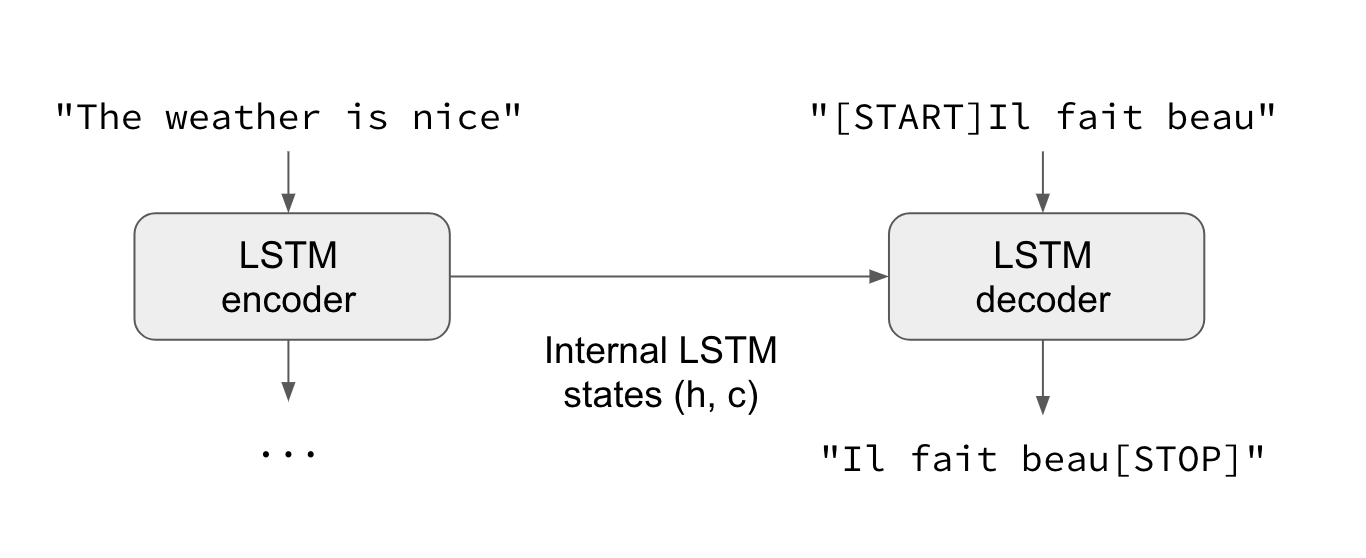

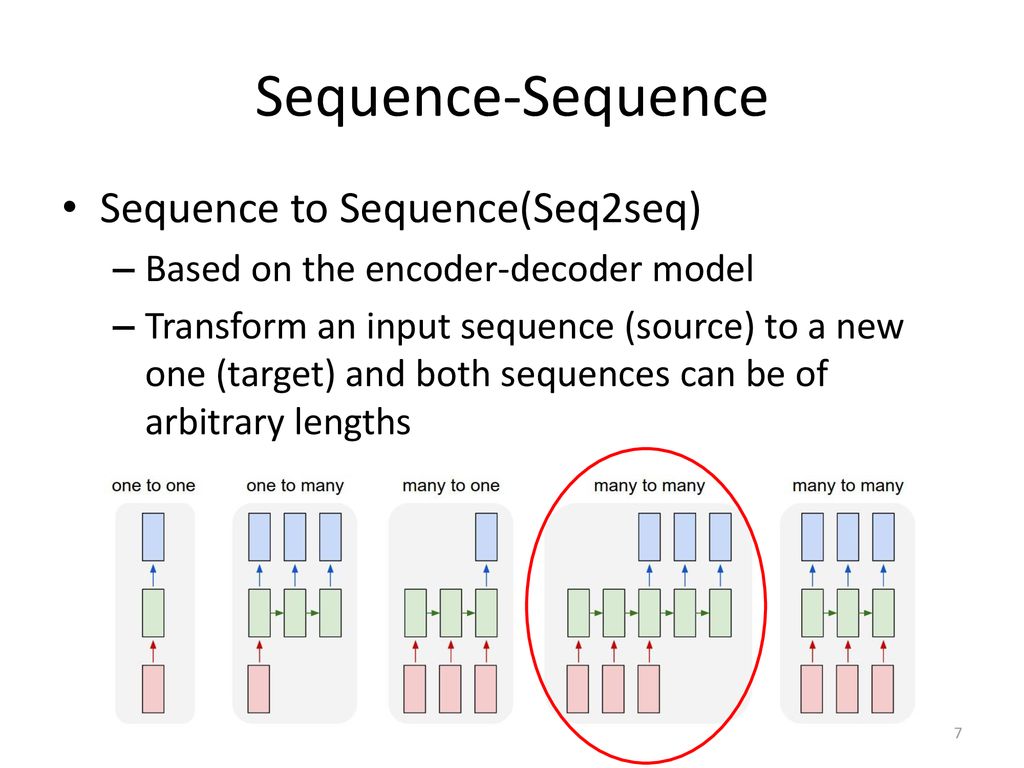

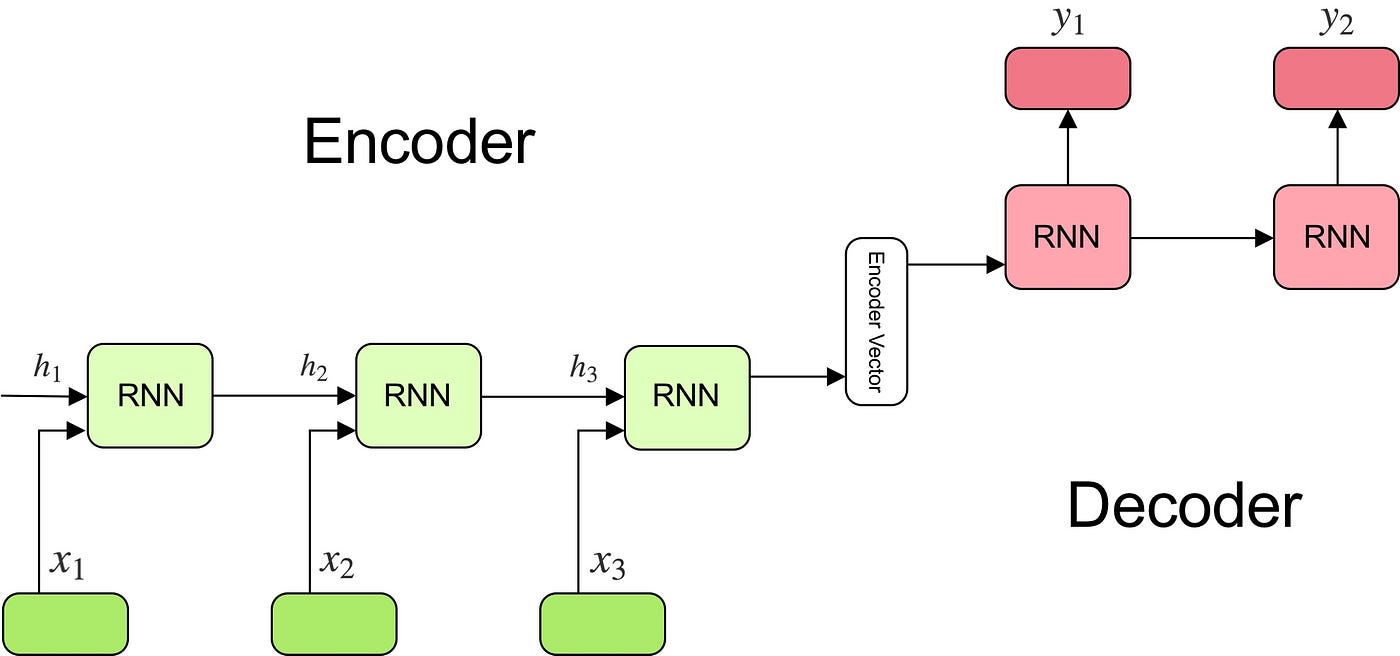

Understanding Encoder-Decoder Sequence to Sequence Model | by Simeon Kostadinov | Towards Data Science

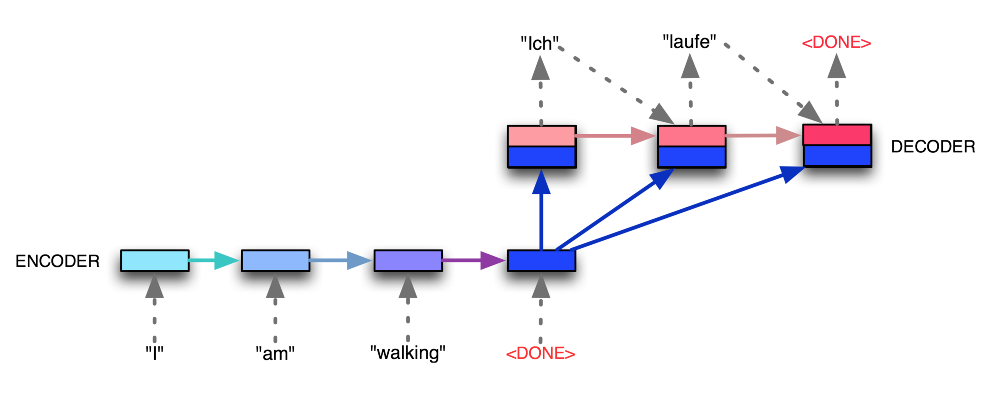

An example of sequence-to-sequence model with attention. Calculation of... | Download Scientific Diagram

Deep Natural Language Processing: Einstieg in Word Embedding, Sequence-to-Sequence-Modelle und Transformer mit Python : Hirschle, Jochen: Amazon.de: Books

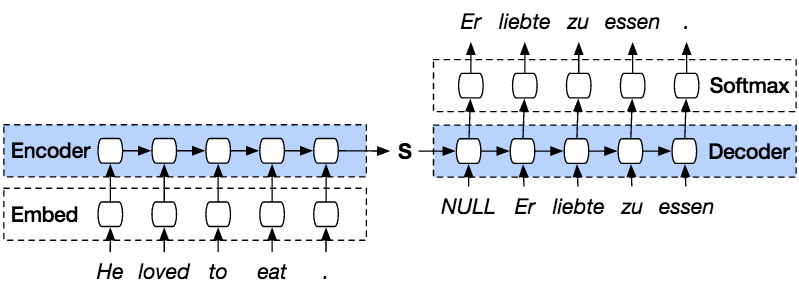

NLP From Scratch: Translation with a Sequence to Sequence Network and Attention — PyTorch Tutorials 2.0.1+cu117 documentation

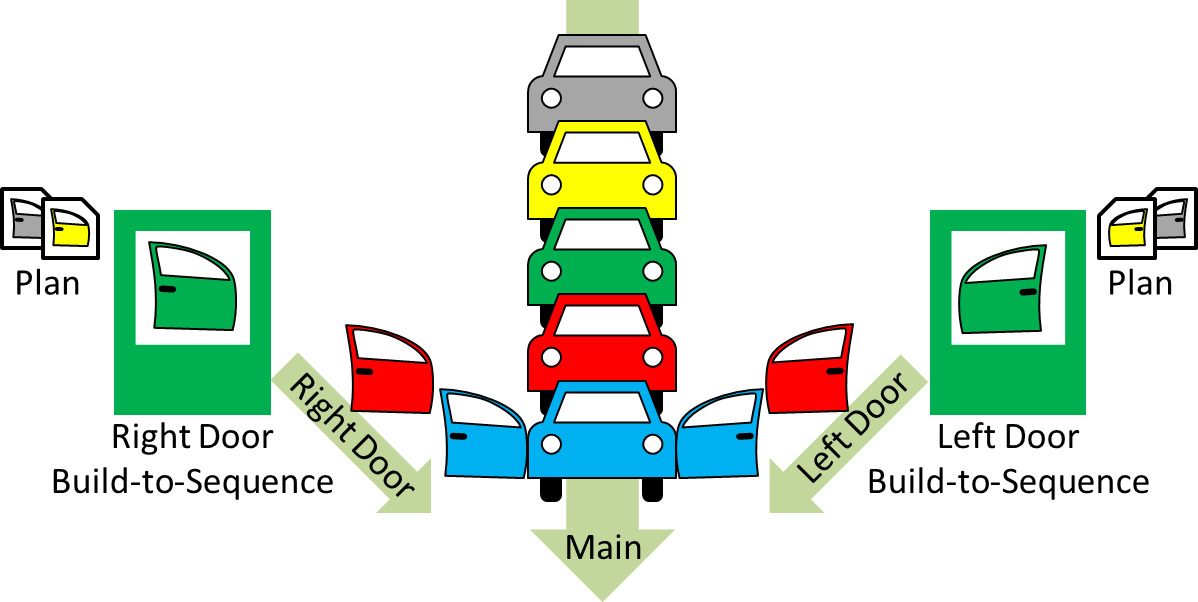

Permutation Invariant Graph-to-Sequence Model for Template-Free Retrosynthesis and Reaction Prediction | Journal of Chemical Information and Modeling

Introducing tf-seq2seq: An Open Source Sequence-to-Sequence Framework in TensorFlow – Google AI Blog

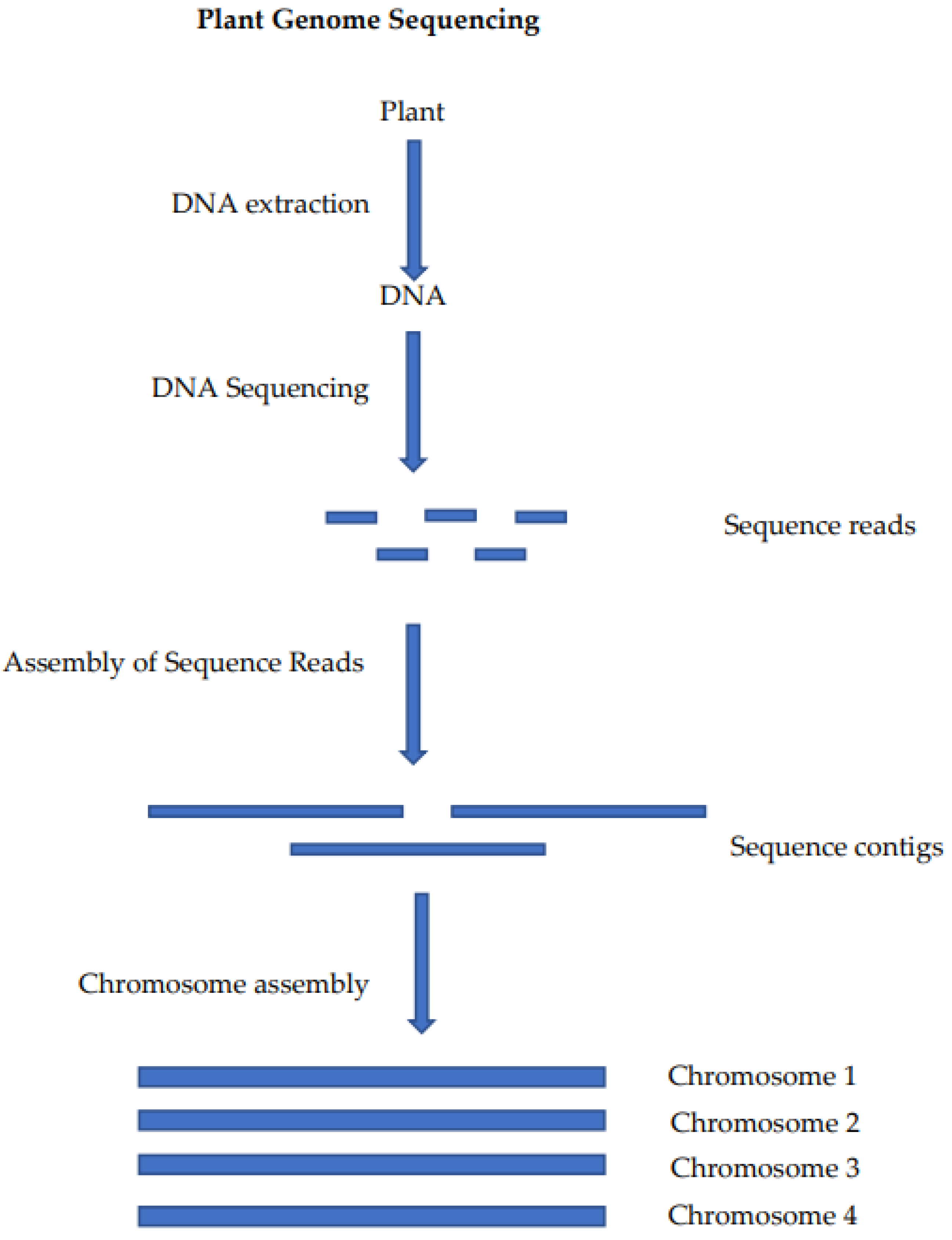

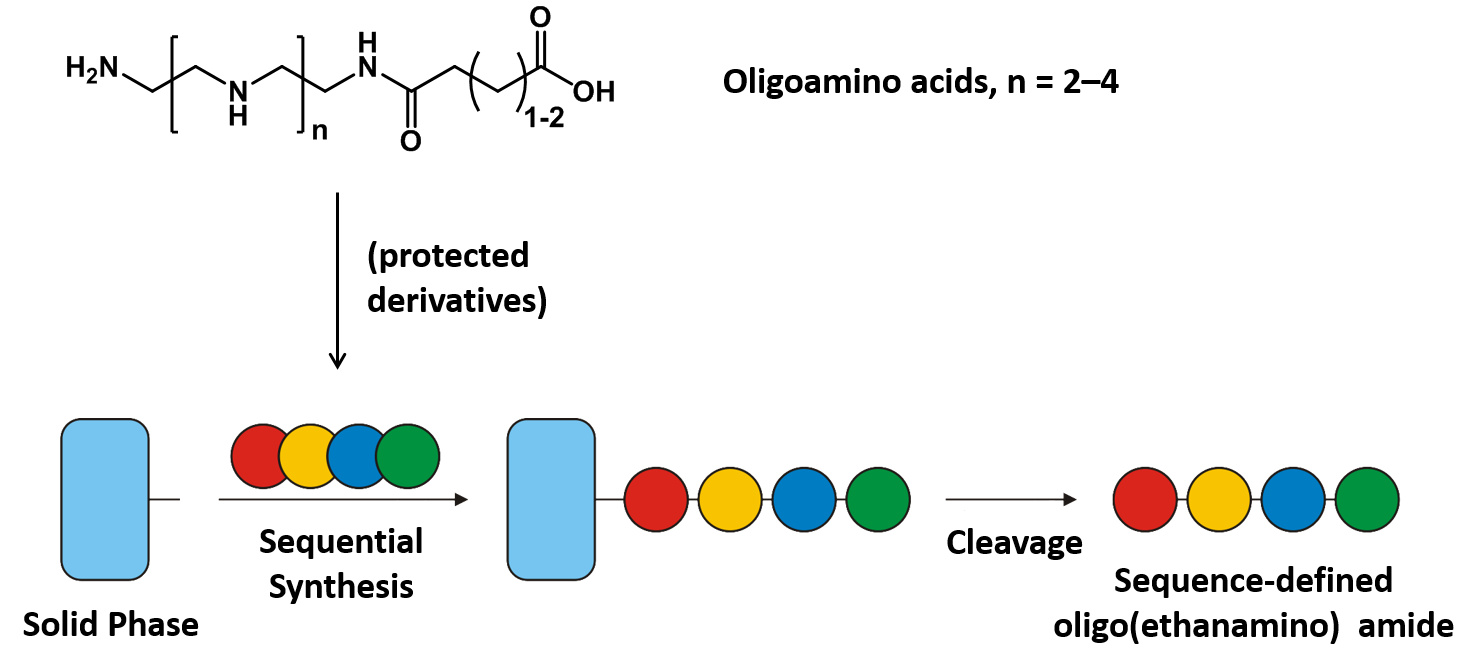

Pharmaceutical Biotechnology - Faculty for Chemistry and Pharmacy - Sequence-defined oligo(ethanamino) amides